
// welcome

Detroit Developers

monthly meetup

detroitdevelopers.com

⑂ main

● welcome.md

1 / 42

Detroit, MI


// thank you to our sponsor

RIVET

Construction Labor Planning for increased Productivity

We're hiring →

rivet.work/careers

● sponsors.md

2 / 42

rivet.work

// your organizers

Phil Borel

Organizer

philborel.com

detroitdevelopers.com

Louis Gelinas

Organizer

linkedin.com/in/louis-gelinas

● organizers.md

3 / 42

// intro

Agentic Software Development

Advanced Practitioner's Guide

Phil Borel · RIVET · Detroit Developers · April 2026

● title.md

4 / 42

// intro

"A working case study. Not a success story. Yet."

● framing.md

5 / 42

// intro

What does 3x even mean?

- Vibes — execs feel like we shipped 3x the value

- Metrics — DORA numbers, PR throughput, features shipped

- Outcomes — users get more value more quickly

- All three matter. None is sufficient alone.

- Risk: 10x some metrics while seeing modest gains on outcomes

● what-is-3x.md

6 / 42

// intro

AI compresses the 20%. The hard part is the 80%.

- Writing code was always 20% of the work

- Testing, refining, validating — always 80%

- 10x on 20% doesn't get you to 3x overall

- This talk is about the 80%: spec quality, review process, architectural discipline
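The arithmetic behind "10x on 20% doesn't get you to 3x" can be sketched with Amdahl's law. This takes the slide's 20/80 split at face value and assumes, for illustration only, that AI speeds up just the coding share by a factor $s$:

```latex
S_{\text{overall}}(s) = \frac{1}{0.8 + \dfrac{0.2}{s}}, \qquad
S_{\text{overall}}(10) = \frac{1}{0.8 + 0.02} = \frac{1}{0.82} \approx 1.22, \qquad
\lim_{s \to \infty} S_{\text{overall}}(s) = \frac{1}{0.8} = 1.25
```

Under those assumptions, even instantaneous code generation tops out at 1.25x. Reaching 3x overall with a 10x coding speedup would require the remaining 80% to shrink from 0.8 to about 0.31 of the original effort, roughly a 2.55x improvement in the non-coding work.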

● the-20-percent.md

7 / 42

// act-1: ai is an amplifier

Act 1

AI is an Amplifier

● act-1.md

8 / 42

// act-1: ai is an amplifier

AI is an amplifier.

- DORA 2025 — 5,000 professionals, 100+ hours qualitative research

- Magnifies the strengths of high-performing orgs

- Magnifies the dysfunctions of struggling ones

- 92% of devs use AI monthly — but outcomes are splitting

- High-performing orgs: 50% fewer customer incidents. Struggling orgs: 2× more. Same tools.

- "AI is moving organizations in different directions." — Laura Tacho, DX

● dora.md

9 / 42

// act-1: ai is an amplifier

What is AI about to amplify at your org?

- Good architecture → great architecture

- Bad architecture → messy, sprawling code generated at scale

- Good specs → accurate, testable implementations

- Vague specs → confidently wrong code that passes tests you didn't write

- Consistent patterns → idiomatic output on the first try

- Three divergent patterns → agent picks whichever it saw last, tech debt at speed

● amplifier.md

10 / 42

// act-1: ai is an amplifier

The velocity gains were temporary. The tech debt was permanent.

- CMU — 807 repos after Cursor adoption

- 281% increase in lines added, month one

- Month two: velocity gains gone, back to baseline

- Static analysis warnings +30%, code complexity +41% — permanently

- Complexity increase → ~65% drop in future velocity

- "Slop creep" — Boris Tane: individually reasonable, collectively destructive

● cautionary-data.md

11 / 42

// act-1: ai is an amplifier

Taste × Discipline × Leverage — multiplicative, not additive

- Taste — when generation is free, knowing what's worth generating is the scarce skill

- Discipline — specs before prompts, tests before shipping, reviews before merging

- Teams skipping discipline are reporting production disasters — Cortex 2026: incidents/PR up 23.5%

- Leverage — small teams, stacked PRs, agent orchestration, design engineers eliminating handoffs

● framework.md

12 / 42

// act-1: ai is an amplifier

This isn't theoretical.

- Amazon retail: spike in outages from AI-assisted changes → senior sign-off mandate for junior/mid engineers

- Anthropic's own website shipped a basic regression affecting every paying customer — 80%+ generated with Claude Code

- "I don't think we're even trading this off to move faster. I think we're moving at a normal pace." — OpenCode CEO

- Discipline is what separates teams that sustain from teams that crash

● reality-check.md

13 / 42

// act-1: ai is an amplifier

Best practices matter more with AI, not less.

● implication.md

14 / 42

// act-2: code quality is harness quality

Act 2

Code Quality is Harness Quality

● act-2.md

15 / 42

// act-2: code quality is harness quality

The bottleneck is the environment, not the model.

- OpenAI harness engineering: 3 engineers (later 7), ~1M lines, 1,500 PRs, zero hand-written code

- When the agent fails: "what capability is missing, and how do we make it legible and enforceable?"

- Failures are harness problems, not prompt problems

● environment.md

16 / 42

// act-2: code quality is harness quality

Refactoring is AI infrastructure — not cleanup.

● refactoring.md

17 / 42

// act-2: code quality is harness quality

RIVET: backend layered architecture

- Three divergent patterns → one: DB → Model → Repository → Service → Controller → Router

- Each layer: one clear responsibility, one clear contract with adjacent layers

- Defense-in-depth permissions at router, controller, and service levels

- Agent can't accidentally create an unprotected endpoint — the pattern enforces security

- Maps closely to OpenAI's enforced architecture. Not a coincidence.
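A minimal sketch of the layering idea, not RIVET's actual code. Every class, route, and permission name below is hypothetical, and the DB and Model layers are collapsed into an in-memory dict. The point it illustrates: the router refuses to register a route without a permission, and the controller and service each re-check it.

```python
from dataclasses import dataclass


class PermissionDenied(Exception):
    """Raised when a caller lacks the permission a layer requires."""


@dataclass(frozen=True)
class User:
    name: str
    permissions: frozenset


def require(user: User, permission: str) -> None:
    """Shared guard that every layer calls before doing any work."""
    if permission not in user.permissions:
        raise PermissionDenied(f"{user.name} lacks '{permission}'")


class ProjectRepository:
    """Repository layer: the only code that touches storage (a dict here)."""

    def __init__(self) -> None:
        self._rows = {1: {"id": 1, "name": "Bridge retrofit"}}

    def get(self, project_id: int) -> dict:
        return self._rows[project_id]


class ProjectService:
    """Service layer: business rules, with its own permission check."""

    def __init__(self, repo: ProjectRepository) -> None:
        self._repo = repo

    def get_project(self, user: User, project_id: int) -> dict:
        require(user, "project:read")
        return self._repo.get(project_id)


class ProjectController:
    """Controller layer: shapes the response and re-checks the permission."""

    def __init__(self, service: ProjectService) -> None:
        self._service = service

    def show(self, user: User, project_id: int) -> dict:
        require(user, "project:read")
        return {"status": 200, "body": self._service.get_project(user, project_id)}


class Router:
    """Router layer: a route cannot be registered without a permission."""

    def __init__(self) -> None:
        self._routes = {}

    def add(self, path: str, permission: str, handler) -> None:
        if not permission:
            raise ValueError(f"route {path} must declare a permission")
        self._routes[path] = (permission, handler)

    def dispatch(self, path: str, user: User, **params) -> dict:
        permission, handler = self._routes[path]
        require(user, permission)
        return handler(user, **params)


def build_router() -> Router:
    """Wire the layers together in the one allowed direction."""
    controller = ProjectController(ProjectService(ProjectRepository()))
    router = Router()
    router.add("/projects", "project:read", controller.show)
    return router
```

Because the only way to expose a handler is `Router.add`, an agent following the pattern has no path to an unprotected endpoint.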

● backend.md

18 / 42

// act-2: code quality is harness quality

RIVET: frontend state migration

- Legacy: load full app state upfront, apply incremental updates

- Problem: sluggish loads, global state — hard for humans and agents

- New: fetch data when and where needed, local component state, explicit dependencies

- Smaller blast radius per change — agent can work in one area without breaking adjacent ones

- Two goals: better UX for users + a codebase Claude can work in safely

● frontend.md

19 / 42

// act-2: code quality is harness quality

Mechanical enforcement > documentation

- OpenAI: encoded architectural rules as custom linters, not prose

- Linter error messages written as remediation instructions — every violation teaches the agent how to fix it

- Rules that feel heavy in human-first workflows become multipliers with agents — apply everywhere at once

- Documentation drifts. Lint rules don't.
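A sketch of what "error messages as remediation instructions" could look like, using Python's standard `ast` module. The rule itself (controllers must not import repositories) and the `app.repositories` / `app.services` package names are assumptions for illustration. They are not OpenAI's or RIVET's actual linters.

```python
import ast

# Hypothetical rule: controllers may import services but never repositories.
FORBIDDEN_PREFIX = "app.repositories"

REMEDIATION = (
    "{path}:{line}: controllers must not import '{module}'. "
    "Move the data access into a service under app.services and "
    "call that service from the controller instead."
)


def check_controller_imports(path: str, source: str) -> list[str]:
    """Return one remediation message per forbidden import in a controller file."""
    messages = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ImportFrom):
            modules = [node.module or ""]
        elif isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        else:
            continue
        for module in modules:
            if module == FORBIDDEN_PREFIX or module.startswith(FORBIDDEN_PREFIX + "."):
                messages.append(
                    REMEDIATION.format(path=path, line=node.lineno, module=module)
                )
    return messages
```

The message names the violation and the fix in one line, so an agent reading the lint output gets its next step without consulting separate documentation.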

● enforcement.md

20 / 42

// act-2: code quality is harness quality

Rules without refactoring are aspirations, not enforcement.

● caveat.md

21 / 42

// act-3: context engineering

Act 3

Context Engineering

● act-3.md

22 / 42

// act-3: context engineering

From prompting to context engineering

- Prompting — telling an AI what to do in a single interaction

- Context engineering — building the persistent, structured context that makes an agent reliably useful over time, across a whole team

- CLAUDE.md is where this starts — but the real harness extends into linters, tests, CI gates, observability, and automated maintenance

● context-engineering.md

23 / 42

// act-3: context engineering

CLAUDE.md: map, not encyclopedia

- A large instruction file actively degrades agent performance — crowds out the task

- When everything is "important," agents fall back to local pattern-matching

- ~100 lines max — structured as a map with pointers to deeper sources of truth

- Checked into the repo. Reviewed in PRs. Evolved alongside the codebase.

- Team artifact, not personal config
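One way to keep the "map, not encyclopedia" budget honest is a small check that could run in CI. This is a sketch: the function name is hypothetical, and the 100-line threshold is the slide's rule of thumb, not a limit imposed by any tool.

```python
import re

MAX_LINES = 100  # the slide's rough budget, not a hard limit from any tool


def audit_instruction_file(text: str, max_lines: int = MAX_LINES) -> dict:
    """Count lines and collect markdown link targets in an instruction file."""
    lines = text.splitlines()
    # Link targets are the "pointers to deeper sources of truth".
    pointers = re.findall(r"\[[^\]]*\]\(([^)\s]+)\)", text)
    return {
        "line_count": len(lines),
        "within_budget": len(lines) <= max_lines,
        "pointers": pointers,
    }
```

A file that is over budget or has no pointers at all is a hint that detail belongs in linked documents instead.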

● claude-md.md

24 / 42

// act-3: context engineering

Rules and hooks

- Rules — constraints the agent follows automatically: coding standards, naming patterns, always/never

- Hooks — automated actions triggered by agent behavior: linters after generation, auto-formatting

- Together: continuous mechanical enforcement, everywhere at once, without human attention

- At RIVET: rules in progress, hooks on the roadmap
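A sketch of the decision logic a "lint after generation" hook might contain. It assumes the hook receives a JSON event shaped like `{"tool_input": {"file_path": "..."}}`, which should be verified against your agent's hook documentation. The suffix-to-command table is hypothetical, though the commands themselves are ordinary `ruff`, `prettier`, and `eslint` invocations.

```python
import json
from pathlib import PurePosixPath

# Hypothetical mapping from file suffix to the commands a hook should run.
COMMANDS_BY_SUFFIX = {
    ".py": [["ruff", "format", "{path}"], ["ruff", "check", "{path}"]],
    ".ts": [["prettier", "--write", "{path}"], ["eslint", "{path}"]],
}


def commands_for_event(raw_event: str) -> list[list[str]]:
    """Pick formatter and linter commands for the file named in a hook event.

    Assumes the event is JSON shaped like {"tool_input": {"file_path": "..."}};
    check your agent's hook documentation for the real payload.
    """
    event = json.loads(raw_event)
    path = event.get("tool_input", {}).get("file_path")
    if not path:
        return []
    templates = COMMANDS_BY_SUFFIX.get(PurePosixPath(path).suffix, [])
    return [[part.format(path=path) for part in cmd] for cmd in templates]
```

A real hook script would pass each returned command to `subprocess.run` and surface any non-zero exit back to the agent, so the violation is fixed in the same session that introduced it.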

● rules-hooks.md

25 / 42

// act-3: context engineering

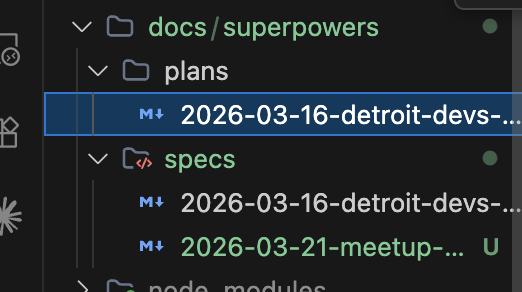

PILRs: context that accumulates

- Persistent Indexed Learning Repos — structured knowledge bases the agent can reference

- index.json maps topics → documentation files. Agent loads what it needs, not everything.

- PILRs grow as the agent works — organic accumulation vs. upfront documentation

- Goal: "generic coding assistant" → "specialist who knows how our platform is built"

- Addresses the cold-start problem. You don't have to document everything upfront.
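The "load what it needs, not everything" lookup can be sketched as below. The talk does not show the `index.json` schema, so this assumes the simplest possible one, a flat object mapping topic to file path. Both function names are hypothetical.

```python
import json


def select_documents(index_json: str, task: str) -> list[str]:
    """Return the doc paths whose topic appears in the task description.

    Assumes index.json is a flat object of {"topic": "path/to/doc.md"};
    the real schema is not shown in the talk.
    """
    index = json.loads(index_json)
    task_lower = task.lower()
    selected = []
    for topic, path in index.items():
        if topic.lower() in task_lower and path not in selected:
            selected.append(path)
    return selected


def record_learning(index_json: str, topic: str, path: str) -> str:
    """Return an updated index with a new topic, so the repo grows with use."""
    index = json.loads(index_json)
    index[topic] = path
    return json.dumps(index, indent=2, sort_keys=True)
```

Substring matching is deliberately naive here. The design point is that the index is cheap to read in full, while the documents behind it are loaded only on a match.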

● pilrs.md

26 / 42

// act-3: context engineering

github.com/obra/superpowers

● pilrs-examples.md

27 / 42

// act-3: context engineering

PILRs at Signal Advisors

- MCP server loads PRDs & tech specs as context for agents working across multiple code repos

- Agents get the right domain knowledge without it living in any single repo

- Same pattern, different delivery mechanism — the PILR principle scales beyond local files

● pilrs-signal.md

28 / 42

// act-3: context engineering

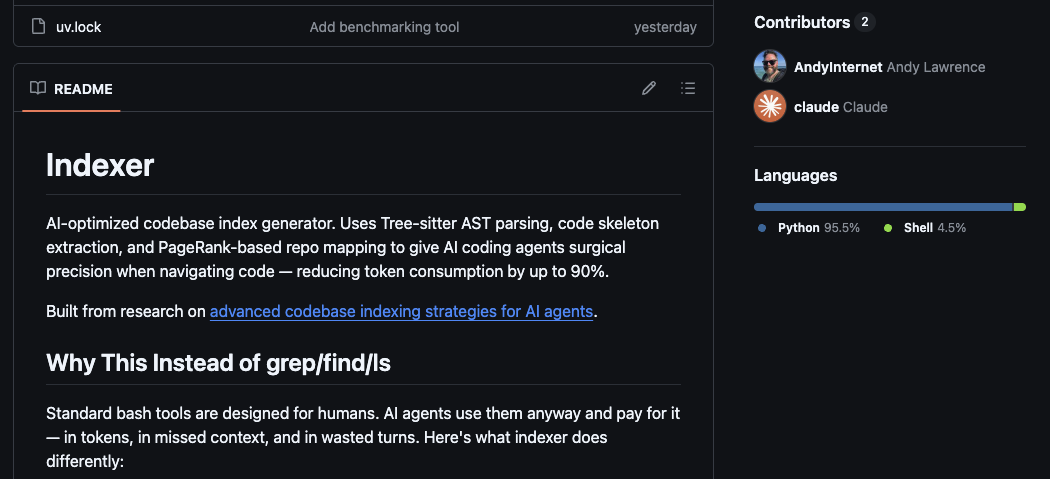

github.com/AndyInternet/indexer

● pilrs-indexer.md

29 / 42

// act-3: context engineering

Skills: making agent usage programmable

- Reusable packaged workflows — complex multi-step processes become a single command

- At RIVET: /review-pr, /implement, /update-project-docs, bug-fix skills, PILR builders, commit rewriter

- Skills are team assets: versioned, shared, improved over time

- This is where the promises of GenAI for coding start to make real sense

● skills.md

30 / 42

// act-3: context engineering

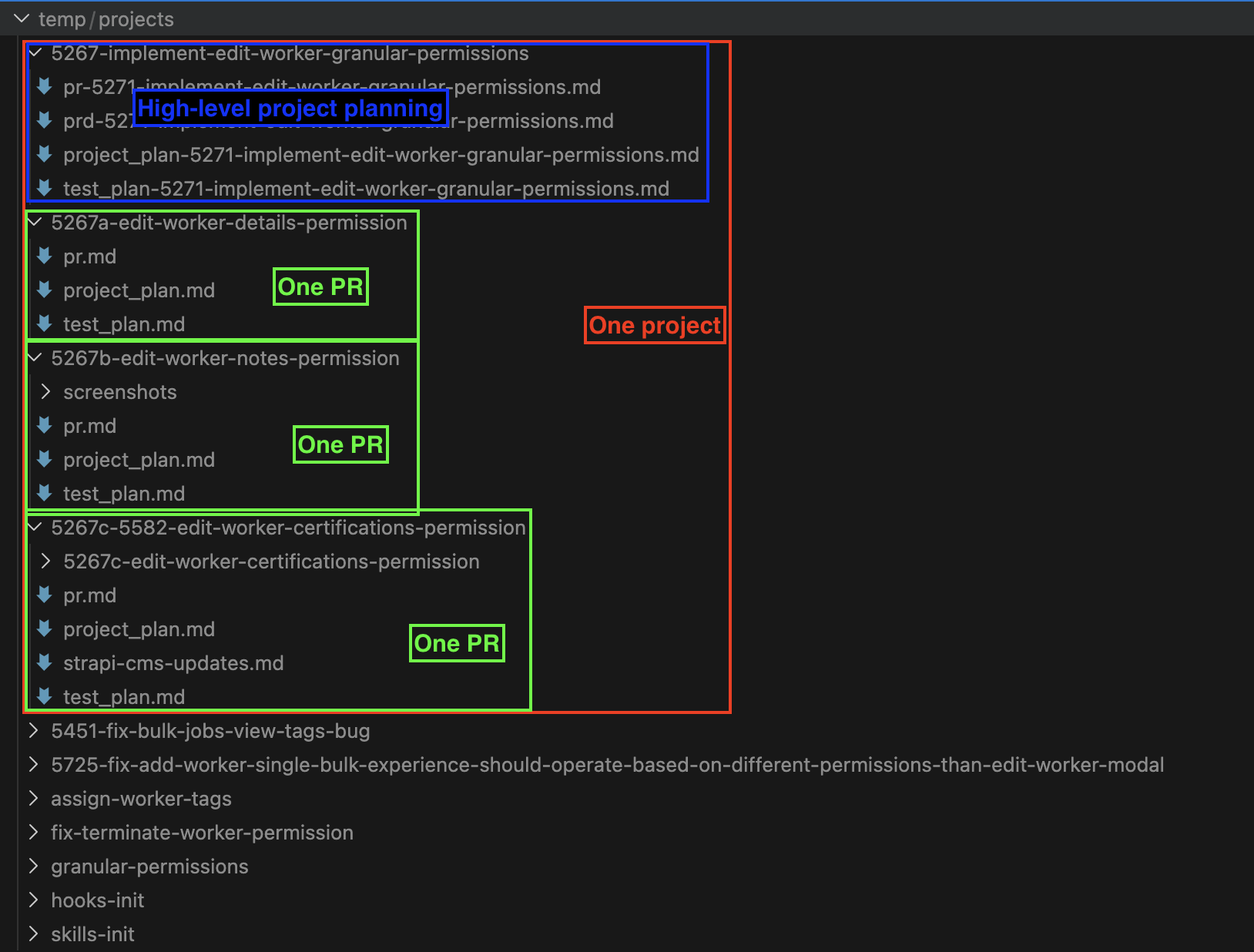

The /implement skill

- PRD + Tech Spec → Project Plan (reviewed before code is written)

- Project Plan → Test Plan: automated tests + manual testing steps

- Agent writes code, executes test plan via cURL + Claude Chrome Extension

- Screenshots → gitignored folder → referenced in pr.md

- Honest: ~25% success rate on screenshots. Worth it anyway.

- Start with /review-pr or /commit — not /implement

● implement-skill.md

31 / 42

// act-3: context engineering

The support bot agent: "re-purchased half a developer"

- Before: ticket → ~20 min understanding + ~20 min finding the code + fix time

- Now: agent triages, reproduces, proposes fix or surfaces what the engineer needs

- Uses a PILR — persistent directory of previously solved problems + index

- Result: support backlog per two-week shift dropped significantly

- Doesn't solve novel architectural bugs — good at "we've seen this pattern before"

- Why it works: the harness came first. Without CLAUDE.md, the agent just guesses.
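The "we've seen this pattern before" step could be approximated by ranking past fixes against a new ticket. This sketch uses plain Jaccard word overlap as a stand-in. It is not the support bot's actual matching logic, and the `solved/...` paths are hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens, ignoring very short words."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 2}


def rank_solved_problems(
    ticket: str, solved: dict[str, str], top_n: int = 3
) -> list[tuple[str, float]]:
    """Rank previously solved problems by Jaccard similarity to a new ticket.

    `solved` maps a hypothetical note path to its summary text.
    """
    ticket_tokens = tokens(ticket)
    scored = []
    for path, summary in solved.items():
        summary_tokens = tokens(summary)
        union = ticket_tokens | summary_tokens
        score = len(ticket_tokens & summary_tokens) / len(union) if union else 0.0
        if score > 0:
            scored.append((path, score))
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_n]
```

An empty result is itself useful: it signals a novel problem that should go to an engineer instead of being pattern-matched.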

● support-bot.md

32 / 42

// act-3: context engineering

Spec-Driven Development

- Specify → Plan → Tasks → Implement

- Expanded the "safe delegation window" from 10–20 minute tasks to multi-hour feature delivery

- The harness makes the agent reliable. SDD makes the work product predictable.

- Forces hard thinking to happen before implementation, not during it

- Claude Code's plan mode is built for this workflow
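The Specify → Plan → Tasks → Implement ordering can be read as a gate: no stage is approved before the one preceding it. A minimal sketch of that gate follows, with a hypothetical class name. It models only the ordering, not the content of each artifact.

```python
from dataclasses import dataclass, field

STAGES = ("specify", "plan", "tasks", "implement")


@dataclass
class SpecDrivenWork:
    """Tracks which stage artifacts have been approved, in order."""

    approved: list = field(default_factory=list)

    def next_stage(self):
        """Return the next stage to work on, or None when all are approved."""
        if len(self.approved) == len(STAGES):
            return None
        return STAGES[len(self.approved)]

    def approve(self, stage: str) -> None:
        """Approve a stage; refuses to skip ahead of the current stage."""
        expected = self.next_stage()
        if stage != expected:
            raise ValueError(f"cannot approve '{stage}' before '{expected}'")
        self.approved.append(stage)
```

Rejecting an early `approve("implement")` is the mechanical version of "hard thinking happens before implementation, not during it".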

● sdd.md

33 / 42

// act-3: context engineering

4-layer review

- 1. Architectural review — human, before code is written

- 2. Automated review — /review-pr skill, SonarCloud, Copilot review, linting, tests (all in CI)

- 3. Human code review + manual QA — focused on the feature, based on test steps in the PR

- 4. Full-app UAT/regression — end of every sprint (working toward replacing with Playwright)

- Unsolved: without ephemeral envs, reviewers contend for shared test servers — gets worse as PR volume increases

● review.md

34 / 42

// act-4: multi-agent reality check

Act 4

Multi-Agent Reality Check

● act-4.md

35 / 42

// act-4: multi-agent reality check

The promise: a fleet of AI collaborators

- One agent orchestrates many — background agents on separate branches in parallel

- An engineer manages a fleet, not a single assistant

- Cursor is doing this. Ramp. Stripe. Large AI-native companies.

- Orchestration platforms emerging: Coder and others — provisioning environments, coordinating agents across a codebase

● the-promise.md

36 / 42

// act-4: multi-agent reality check

Where we actually are

- ✓ GitHub Copilot cloud agents

- ✓ Support bot agent — and it's working

- ~ Running 1–3 local tasks at once — sometimes

- "I've read sensational claims from Ramp and Stripe. We don't have things tuned to that level."

- "I haven't figured out how to multiplex my brain yet."

● where-we-are.md

37 / 42

// act-4: multi-agent reality check

What actually happened when I tried

- Context debt: you need rich structured context in place — we weren't there yet

- Review bottleneck: more agents = more PRs = worse pile-up

- Cognitive load: managing multiple agent threads is a different skill, not just more of the same

- Not that the tools don't work — we hadn't earned the right to use them yet

● what-happened.md

38 / 42

// act-4: multi-agent reality check

We're in the J-curve: the dip before the improvement.

- The things that are working are working because of harness investment

- Multi-agent nirvana requires the full harness first — we're not done building it

- This is a living experiment, not a solved problem

- Anyone who tells you they've fully solved this is either Cursor or not telling the whole story

● honest-summary.md

39 / 42

// close

Monday morning

- Write a CLAUDE.md for one repo: tech stack, architecture in two paragraphs, three things you wish every new engineer knew

- Try one skill — start simple: /review-pr or /commit, not /implement

- Identify one refactor that would make your codebase more agent-navigable — put it on the roadmap

- None of this requires a BHAG or an executive mandate

● monday.md

40 / 42

// close

Further reading

- DORA AI Capabilities Model (2025) — google.com

- Harness Engineering — OpenAI (Feb 2026)

- Building an Elite AI Engineering Culture — CJ Roth (Feb 2026)

- Are AI Agents Actually Slowing Us Down? — Pragmatic Engineer (Mar 2026)

- Speed at the Cost of Quality — Carnegie Mellon (2026)

- Full blog post with all references: detroitdevelopers.com

- Superpowers plugin for Claude Code: github.com/obra/superpowers

detroitdevelopers.com/blog/agentic-coding-advanced-guide/

● resources.md

41 / 42

// close

Questions

detroitdevelopers.com

● questions.md

42 / 42